0. 前言

在使用深度学习时候,我们可以有效地提取出我们想要的结果,但是常常会缺少深度信息(双目测景深会耗费大量的计算资源)。因此将激光雷达和单目摄像头相结合,可以有效的补充室内环境的深度信息,而目前3D的激光雷达成本高昂,这里提供一个2D激光雷达的解决方案。

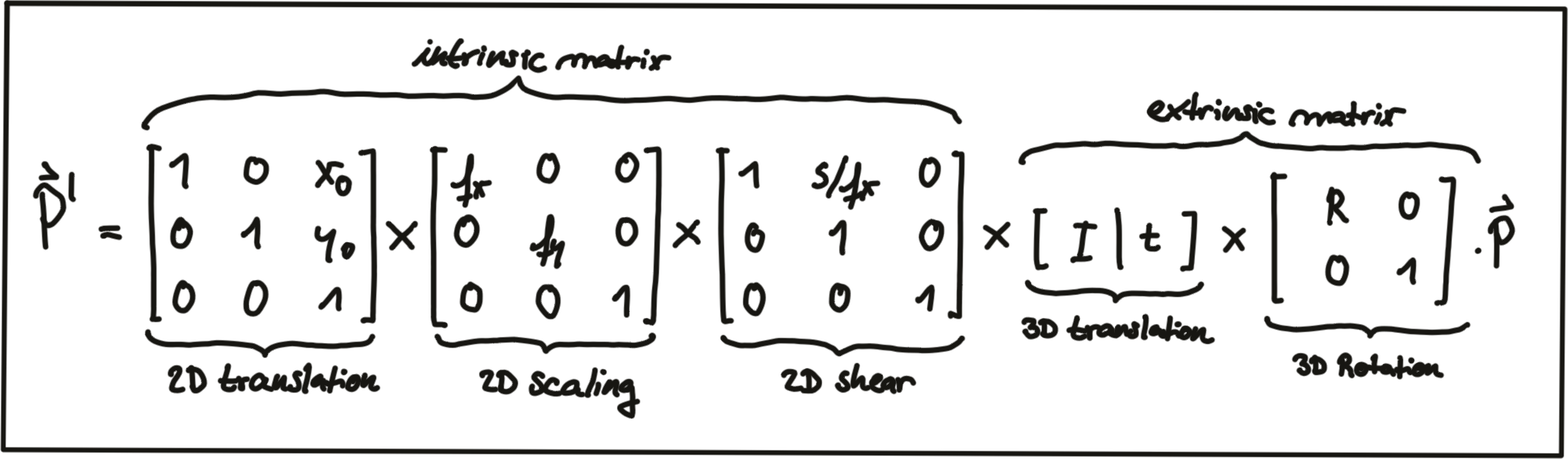

1. 相机坐标系变换

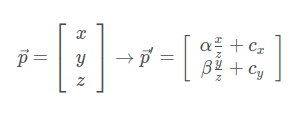

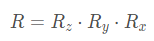

上文提过, 在相机世界中, 3D外界点转换到2D图像像素点转换方程是

我们可以通过相机的内在参数 intrinsic camera parameters实现这一转换. 但是我们不是通过视觉建立的地图,所以地图外部坐标系和相机的外部坐标系位置很有可能是不一样的。

而外参的坐标变化涉及平移和旋转操作, 我们需要将其应用于每个3D点。 问题在于我们得到的投影方程的问题是涉及到 z 的除法, 这使得它们是非线性的(3维), 从而使我们无法将它们转化为更方便的矩阵向量形式.

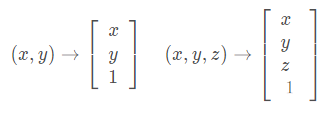

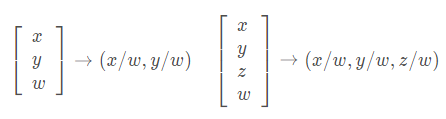

避免此问题的一种方法是同时更改激光雷达和相机的坐标系, 从原始的欧几里得坐标系转换为齐次坐标系的形式. 在两个欧几里得坐标系之间来回移动是一种非线性操作, 但是一旦我们处于齐次坐标系中, 投影变换将变为线性, 因此可以表示为简单的矩阵向量乘法. 两个坐标系之间的转换如下图所示。

欧几里得坐标->齐次坐标

齐次坐标->欧几里得坐标

2. 变换矩阵

内参矩阵

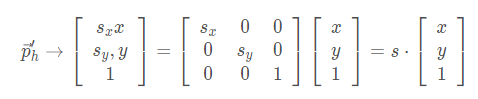

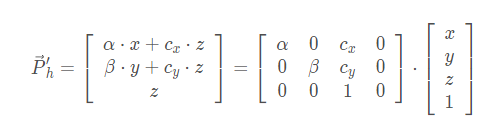

缩放(scale):

通过成分乘以尺度向量S实现缩放.缩放分量S已集成到内矩阵中K,所以在外参中不考虑。

下述方程从左到右依次是齐次坐标系的相机投影几何转换矩阵/ 相机内参数矩阵K/ 齐次坐标点

相机内参数以矩阵形式排列, 可以方便地以紧凑的方式表示针孔相机模型的属性.

内参矩阵告诉你在外部世界的点在经过外参矩阵变换之后, 是如何继续经过摄像机的镜头、并通过针孔成像和电子转化而成为像素点的.

外参矩阵

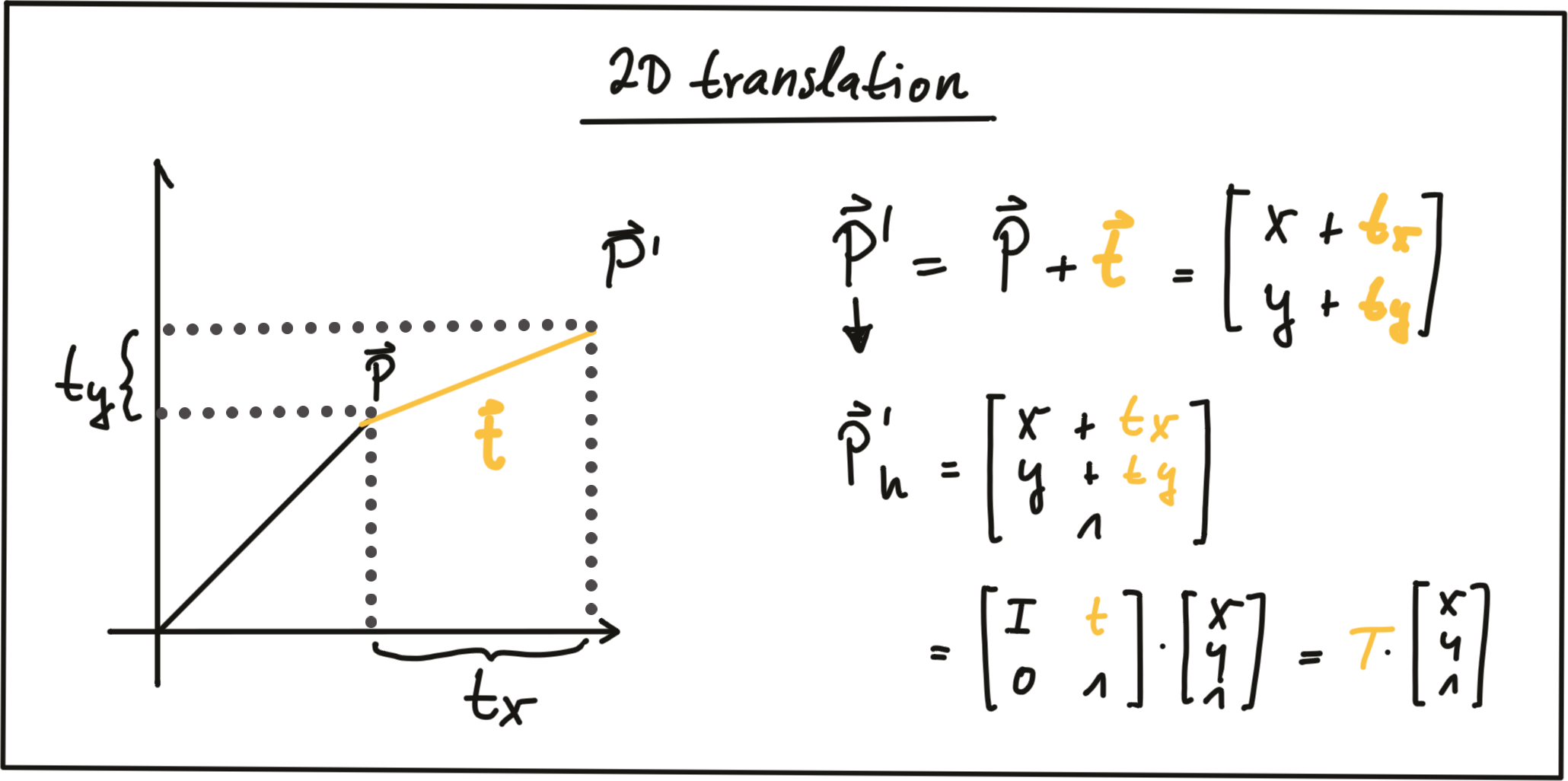

平移(translation):

通过添加平移向量t到P, 使得P点线性平移到新位置P’.

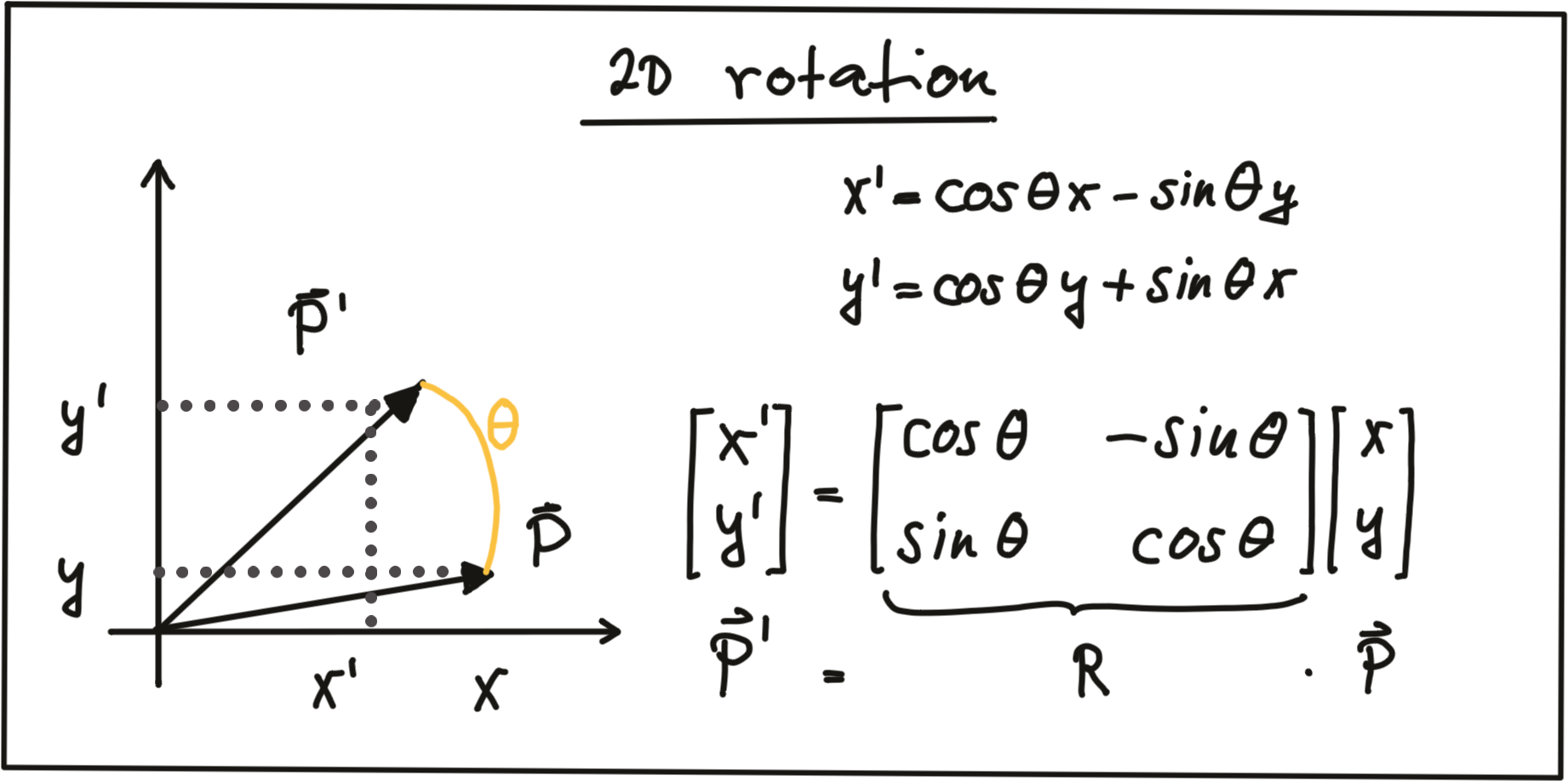

在齐次坐标中, 我们可以使用大小为N的单位矩阵I连接平移向量t表示.

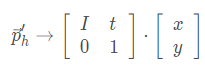

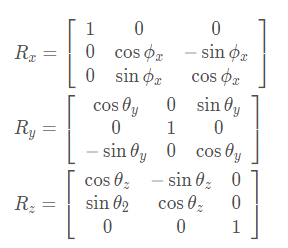

旋转

下图为点P在顺时针方向上的旋转的实现:

其中R被称为旋转矩阵. 在3D空间中, 点P的旋转是围绕x,y,z三个轴实现的, 因此可以表述为下面的旋转公式.

合在一起就是3D旋转公式:

3. 投影方程

通过将各个外参矩阵和内参矩阵进行级联, 实现了3D激光雷达到2D图像平面上的投影.

实现3D投影需要从完成这些坐标系的变换:

激光雷达世界坐标系->相机坐标系(激光雷达和相机都在车辆坐标系中进行位置校准, 从而互相关联)->像平面坐标系->像素坐标系.

坐标系转换:

欧几里得坐标系->齐次坐标系->欧几里得坐标系.

在完成欧几里得坐标转齐次坐标, 转换函数, 齐次坐标转欧几里得坐标这三步之后我们完成了激光雷达到相机的点投影, 从而获得了激光雷达与图像平面相交的区域.。同样3D激光雷达同理。

rp_segment.h

#include <iostream>

#include <fstream>

#include <vector>

#include <stdio.h>

#include <string>

#include <opencv2/opencv.hpp>

#include <ros/ros.h>

#include <math.h>

#include <Eigen/Dense>

#include <sensor_msgs/LaserScan.h>

#include <sensor_msgs/Image.h>

#include <cv_bridge/cv_bridge.h>

#include <geometry_msgs/Point.h>

#include <laser_segment/Insight_lidar_segments.h>

#include <laser_segment/Insight_lidar_segments.h>

#include <message_filters/subscriber.h>

#include <message_filters/time_synchronizer.h>

#include <message_filters/sync_policies/approximate_time.h>

using namespace std;

using namespace cv;

using namespace Eigen;

using namespace message_filters;

/* 雷达-相机外参,相机内参 */

cv::Mat tcl = (Mat_<double>(3,1) << 0.0259365,-0.0200982,-0.0914582);

cv::Mat Rcl = (Mat_<double>(3,3) << -0.0580782,-0.99819,-0.0155896,-0.0126269,

0.0163492,-0.999787,0.998232,-0.0578689,-0.0135535);

cv::Mat K = (Mat_<double>(3, 3) << 1398.526435675626, 0, 974.6271798798929,0, \

1399.471439435285, 533.3491663893035,0, 0, 1);

cv::Mat D = (Mat_<double>(4,1) << -0.101938, -0.0501564, 0.0323029, 0.0226299);

/*

//640x480

cv::Mat K_1 = (Mat_<double>(3, 3) <<601.1819494061277, 0, 329.1305015302001, 0.000000, \

600.0406404279748, 238.3837396948256, 0.000000, 0.000000, 1.000000);

cv::Mat D_1 = (Mat_<double>(4,1) << -0.0541737, -0.761986, 3.11624, -3.69709);

//1280x720

cv::Mat K_2 = (Mat_<double>(3, 3) <<907.3179347878757, 0, 622.1428470715612, 0.000000, \

910.7995408784911, 330.5347846342667, 0.000000, 0.000000, 1.000000);

cv::Mat D_2 = (Mat_<double>(4,1) << -0.120183, 0.0311701, -0.0129947, -0.00799496);

//1920x1080

cv::Mat K_3 = (Mat_<double>(3, 3) <<1398.526435675626, 0, 974.6271798798929,0, \

1399.471439435285, 533.3491663893035,0, 0, 1);

cv::Mat D_3 = (Mat_<double>(4,1) << -0.101938, -0.0501564, 0.0323029, 0.0226299);

*/

cv::Mat global_img_;

int begin_ray_id_;

int min_seg_points_;

string topic_detect_img_ = "cropped_detection_Image";

string topic_laser_seg_ = "insight_laser_seg";

std_msgs::Header global_header_;

typedef Eigen::Matrix < double, 3, 1> Vector3f;

typedef Eigen::Matrix < double, 4, 1> Vector4f;

typedef message_filters::sync_policies::ApproximateTime<laser_segment::Insight_lidar_segments,sensor_msgs::Image> sync_policy_classification;

//Rplidar A1 配置参数

double p_min_scanner_range_ = 0.15000000596;

double p_max_scanner_range_ = 6.0;

double p_scanner_angle_min_ = -3.12413907051;

double p_scanner_angle_max_ = 3.14159274101;

double p_scanner_angle_increment_ = 0.0174532923847;

int p_ranges_num_ = 360;

void reprojectTo_show(cv::Mat* img,std::vector<Eigen::Vector3f>* laser_points,std::vector<int>* laser_idx,const int& i);

void callback(const laser_segment::Insight_lidar_segmentsConstPtr& msg_segments,

const sensor_msgs::ImageConstPtr& msg_Img);

rp_segment.cpp

#include <include/rp_segment.h>

int main(int argc,char **argv)

{

ros::init(argc, argv, "laser_image");

ros::NodeHandle nh_("");

ros::NodeHandle nh_local_("~");

nh_local_.param<int>("begin_Rayid",begin_ray_id_,396);

nh_local_.param<int>("min_seg_Points",min_seg_points_,16);

ROS_INFO("start cropped_detection_Image & insight_laser_seg subscribe");

message_filters::Subscriber<laser_segment::Insight_lidar_segments> segment_sub(nh_, topic_laser_seg_, 1);

message_filters::Subscriber<sensor_msgs::Image> image_sub(nh_,topic_detect_img_, 1);

message_filters::Synchronizer<sync_policy_classification> sync(sync_policy_classification(10),segment_sub,image_sub);

sync.registerCallback(boost::bind(&callback, _1, _2)); // 回调

ros::spin();

ros::shutdown();

return 0;

}

void callback(const laser_segment::Insight_lidar_segmentsConstPtr& msg_segments,

const sensor_msgs::ImageConstPtr& msg_Img)

{

std::cout<<"----------------- Begin ------------------ "<<std::endl;

ROS_INFO("Get pairs");

global_header_ = msg_segments->header;

std::cout<<"time gap is : "<<msg_segments->header.stamp.now() - msg_Img->header.stamp.now()<<std::endl;

/*********************************************************************/

cv_bridge::CvImagePtr cv_ptr = cv_bridge::toCvCopy(msg_Img, "bgr8");

cv::Mat img = cv_ptr->image;

std::vector<laser_segment::Insight_lidar_segment> segs;

for(auto& seg : msg_segments->segments)

if(seg.ray_id.front() >= begin_ray_id_)

segs.push_back(seg);

std::cout<<"(callback) segments in_sight: "<<segs.size()<<std::endl;

if(segs.size() == 0)

ROS_INFO("No segment in sight");

else

{

for(int i = 0;i < segs.size();i++)

{

std::vector<Eigen::Vector3f> laser_points;

std::vector<int> laser_idx;

for(int j = 0;j < segs[i].ray_id.size();j++)

{

double angle = p_scanner_angle_min_ + segs[i].ray_id.at(j) * p_scanner_angle_increment_;

const auto& range = segs[i].ranges.at(j);

if (range >= p_min_scanner_range_ && range <= p_max_scanner_range_)

{

Eigen::AngleAxisf rotation(angle, Eigen::Vector3f::UnitZ());

Eigen::Vector3f point;

point << rotation * (range * Eigen::Vector3f::UnitX());

laser_points.push_back(point);

laser_idx.push_back(segs[i].ray_id.at(j));

}

}

if(laser_points.size() > 0)

{//反投影第i段分割

reprojectTo_show(&img,&laser_points,&laser_idx,i);

}

//ranges_.clear();

//ray_id_.clear();

laser_points.clear();

laser_idx.clear();

}

}

std::cout<<"----------------- End ------------------ "<<std::endl;

segs.clear();

cv::imshow("image_with_segments", img);

cv::waitKey(1);

}

void reprojectTo_show(cv::Mat* img,std::vector<Eigen::Vector3f>* laser_points,std::vector<int>* laser_idx,const int& seg_num) //指针传递

{

std::vector<Point2d> pts_uv;

std::vector<int> idx;

if(laser_points->size() == laser_idx->size() \

&& laser_idx->size() > 0 && laser_idx->size() <= 1081)

{

for (int i = 0; i < laser_points->size(); ++i)

{

const Eigen::Vector3f &point = (*laser_points)[i];

//指针传递的vector索引正确访问方式,".at()"亦可

Mat laser_point(3,1, CV_64FC1);

laser_point.at<double>(0,0) = point[0];

laser_point.at<double>(1,0) = point[1];

laser_point.at<double>(2,0) = point[2];

cv::Mat point_c = Rcl * laser_point + tcl;

if(point_c.at<double>(2,0) <= 0.) //why ???

continue;

point_c.at<double>(0,0) /= point_c.at<double>(2,0);

point_c.at<double>(1,0) /= point_c.at<double>(2,0);

point_c.at<double>(2,0) = 1.0;

cv::Mat uv = K * point_c;

if(uv.at<double>(0,0) >= 0 && uv.at<double>(0,0) <= 1920 && uv.at<double>(1,0) >= 0 \

&& uv.at<double>(1,0) <= 1080) //广角镜头畸变校正后的视野范围

{

Point2d pt_uv(uv.at<double>(0,0), uv.at<double>(1,0));

pts_uv.push_back(pt_uv);

idx.push_back((*laser_idx)[i]);

}

}

cout <<"Segment_"<<seg_num<<" has points ——— "<<pts_uv.size()<<endl;

if((seg_num > 0 && pts_uv.size() < min_seg_points_) || \

(seg_num == 0 && pts_uv.size() < min_seg_points_ / 2))

return;

else

{

cv::Point paint_point;

paint_point.x = pts_uv.back().x;

paint_point.y = pts_uv.back().y + 15;

string ss = std::to_string(idx.back())+" to "+std::to_string(idx.front());;

cv::putText(*img, ss, paint_point ,cv::FONT_HERSHEY_SIMPLEX, 0.5, CV_RGB(0,255,0),1, 8);

for (int j = 0; j < pts_uv.size(); ++j)

{

if(j == 0)

{

cv::circle(*img, pts_uv[j], 3, CV_RGB(0,255,0),2,8);

}

else if(j == pts_uv.size() - 1)

{

cv::circle(*img, pts_uv[j], 3, CV_RGB(0,255,0),2,8);

}

else

{

cv::circle(*img, pts_uv[j], 1, CV_RGB(0,255,0),-1,8);

//图像;圆心坐标;圆半径;圆颜色;圆线条的粗细,负数为填充效果;线条类型

// cv::putText(*img, std::to_string(idx[j]), paint_point ,cv::FONT_HERSHEY_SIMPLEX, 0.3, CV_RGB(0,0, 255), 1, 8);

}

}

paint_point.x = pts_uv.back().x;

paint_point.y = pts_uv.back().y - 15;

string sentance = "Seg_" + std::to_string(seg_num);

cv::putText(*img,sentance,paint_point,cv::FONT_HERSHEY_SIMPLEX, 0.5, CV_RGB(0,255,0),1, 8);

cv::line(*img,pts_uv.front(),pts_uv.back(),CV_RGB(255,0,0),1,8);

}

}

else

ROS_INFO("points size not correct");

pts_uv.clear();

idx.clear();

}

CMakeList.txt

cmake_minimum_required(VERSION 2.8.3)

add_compile_options(-std=c++11)

project(rp_segment_node)

find_package(Armadillo REQUIRED)

find_package(Boost 1.54.0 REQUIRED system)

find_package(catkin REQUIRED COMPONENTS

roscpp

rospy

roslib

std_msgs

sensor_msgs

cv_bridge

image_transport

geometry_msgs

visualization_msgs

darknet_ros_msgs

laser_segment

message_generation

)

find_package(Eigen3 REQUIRED)

find_package(OpenCV REQUIRED)

add_message_files(

FILES

)

## Generate added messages and services with any dependencies listed here

generate_messages(

DEPENDENCIES

std_msgs

geometry_msgs

)

catkin_package(

INCLUDE_DIRS include

LIBRARIES

CATKIN_DEPENDS roscpp std_msgs sensor_msgs geometry_msgs visualization_msgs laser_segment darknet_ros_msgs message_runtime

DEPENDS

)

###########

## Build ##

###########

include_directories(include ${catkin_INCLUDE_DIRS} ${OpenCV_INCLUDE_DIRS} ${EIGEN3_INCLUDE_DIRS} ${ARMADILLO_INCLUDE_DIRS} ${Boost_INCLUDE_DIRS})

add_executable(rp_segment_node src/rp_segment.cpp)

target_link_libraries(rp_segment_node ${catkin_LIBRARIES} ${OpenCV_LIBRARIES} ${EIGEN3_LIBRARIES} ${ARMADILLO_LIBRARIES} ${Boost_LIBRARIES})

package.xml

<?xml version="1.0"?>

<package>

<name>rp_segment</name>

<version>0.0.0</version>

<description>The rp_segment package</description>

<license>TODO</license>

<buildtool_depend>catkin</buildtool_depend>

<build_depend>cv_bridge</build_depend>

<build_depend>image_transport</build_depend>

<build_depend>roscpp</build_depend>

<build_depend>rospy</build_depend>

<build_depend>sensor_msgs</build_depend>

<build_depend>std_msgs</build_depend>

<build_depend>message_generation</build_depend>

<build_depend>roslib</build_depend>

<build_depend>visualization_msgs</build_depend>

<build_depend>geometry_msgs</build_depend>

<build_depend>laser_segment</build_depend>

<build_depend>darknet_ros_msgs</build_depend>

<run_depend>cv_bridge</run_depend>

<run_depend>image_transport</run_depend>

<run_depend>roscpp</run_depend>

<run_depend>roslib</run_depend>

<run_depend>rospy</run_depend>

<run_depend>std_msgs</run_depend>

<run_depend>sensor_msgs</run_depend>

<run_depend>geometry_msgs</run_depend>

<run_depend>visualization_msgs</run_depend>

<run_depend>message_runtime</run_depend>

<run_depend>laser_segment</run_depend>

<run_depend>darknet_ros_msgs</run_depend>

<export>

</export>

</package>

评论(3)

您还未登录,请登录后发表或查看评论