0. 简介

LinK3D、CSF、BALM这几个都是非常方便去插入到激光SLAM框架的。这里我们会分别从多个角度来介绍如何将每个框架插入到SLAM框架中

1. LinK3D:三维LiDAR点云的线性关键点表示

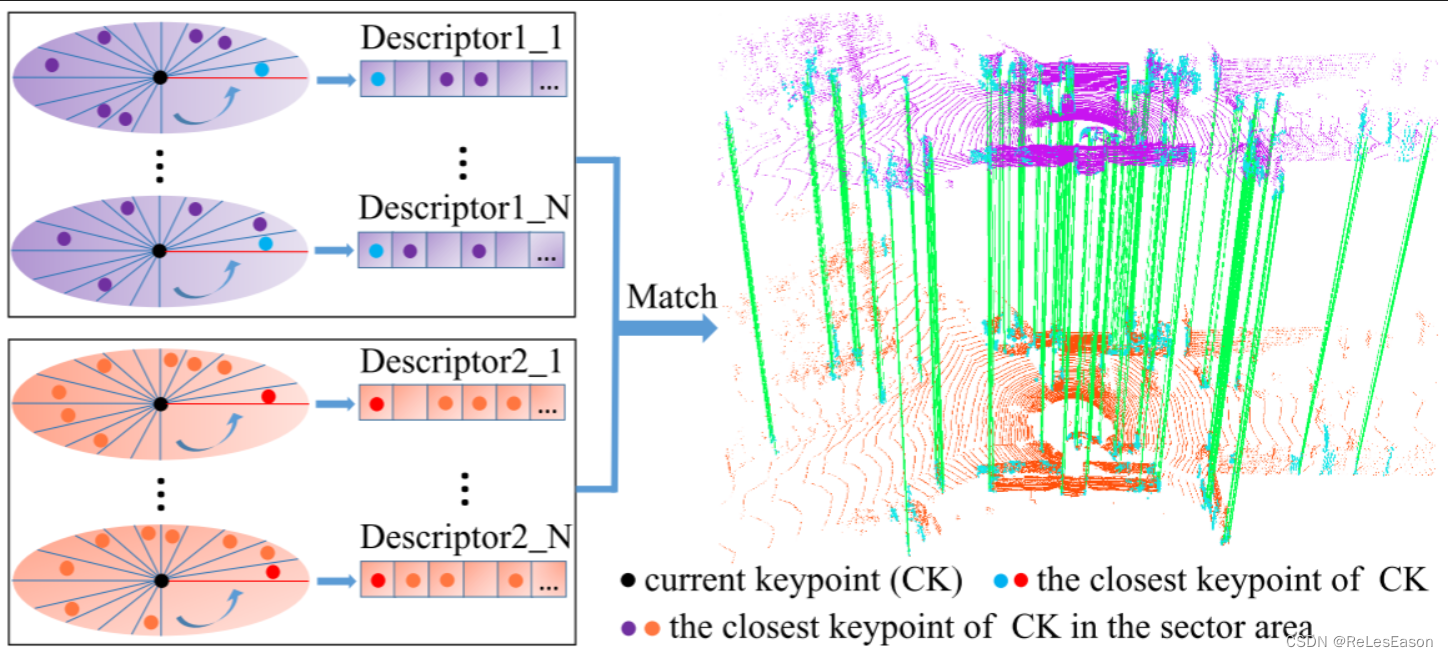

LinK3D的核心思想和基于我们的LinK3D的两个LiDAR扫描的匹配结果。绿色线是有效匹配。当前关键点(黑色,CK)的描述符用其相邻关键点来表示。描述符的每个维度对应于扇区区域。第一维度对应于当前关键点的最近关键点所在的扇区区域(蓝色和红色、CK的最近关键点),并且其他维度对应于以逆时针顺序布置的区域。如果在扇区区域中存在关键点,则搜索扇区区域中最近的关键点(紫色和橙色,扇区中CK的最近关键点)并将其用于表示描述符的对应维度。

2. Link3D数据植入

这里的Link3D数据植入其实在外围调用就这些内容,当中AggregationKeypoints_LinK3D存储的是存当前点云中的聚类后的关键点。而pCurrentFrame_LinK3D对应的则是点云帧。该函数中利用LinK3D仿函数执行了提取边缘点,聚类,计算描述子的操作。其实主要实现的都是LinK3D提取器。

//在这里植入LinK3D,把接收到的点云数据用LinK3D提取边缘点和描述子,发布关键点数据,打印输出描述子

//LinK3D提取器

BoW3D::LinK3D_Extractor* pLinK3dExtractor(new BoW3D::LinK3D_Extractor(nScans, scanPeriod_LinK3D, minimumRange, distanceTh, matchTh));

//创建点云帧,该函数中利用LinK3D仿函数执行了提取边缘点,聚类,计算描述子的操作

Frame* pCurrentFrame_LinK3D(new Frame(pLinK3dExtractor, plaserCloudIn_LinK3D));

//此时pCurrentFrame_LinK3D这个类指针中包含了边缘点,聚类,描述子的信息

//测试 输出关键点数量和第一个关键点信息 正常输出

// cout << "------------------------" << endl << "关键点数量:" << pCurrentFrame_LinK3D->mvAggregationKeypoints.size();

// cout << "第一个关键点信息x坐标" << pCurrentFrame_LinK3D->mvAggregationKeypoints[0].x;

//存当前点云中的聚类后的关键点

AggregationKeypoints_LinK3D->points.insert(AggregationKeypoints_LinK3D->points.end(), pCurrentFrame_LinK3D->mvAggregationKeypoints.begin(), pCurrentFrame_LinK3D->mvAggregationKeypoints.end());

//测试 输出点云中信息 也能正常输出

// cout << "------------------------" << endl << "关键点数量:" << AggregationKeypoints_LinK3D->points.size();

// cout << "第一个关键点信息x坐标" << AggregationKeypoints_LinK3D->points[0].x;

// 2.对描述子进行匹配 3.使用匹配对进行帧间icp配准 pPreviousFrame是上一个link3d Frame帧 pCurrentFrame_LinK3D是当前link3d Frame帧

// 获取上一帧和当前帧之间的匹配索引

vector<pair<int, int>> vMatchedIndex;

pLinK3dExtractor->match(pCurrentFrame_LinK3D->mvAggregationKeypoints, pPreviousFrame->mvAggregationKeypoints, pCurrentFrame_LinK3D->mDescriptors, pPreviousFrame->mDescriptors, vMatchedIndex);

//仿照BoW3D函数写一个帧间ICP匹配函数求出R,t

int returnValue = 0;

// 进行帧间ICP匹配 求当前帧到上一帧的位姿变换

// 这里求的R t是当前帧点云到上一帧点云的位姿变换

returnValue = pose_estimation_3d3d(pCurrentFrame_LinK3D, pPreviousFrame, vMatchedIndex, RelativeR, Relativet, pLinK3dExtractor);

//至此获得了当前帧点云到上一帧点云的位姿变换

//当前帧Frame用完以后,赋值给上一帧Frame,赋值前先把要丢掉的帧内存释放

//这里Frame里有成员指针,析构函数里delete成员指针

delete pPreviousFrame;

pPreviousFrame = pCurrentFrame_LinK3D;

//LinK3D 植入结束

点云帧配置如下,其实可以看到这里面没有太多的内容,主要还是调用Link3D中的void LinK3D_Extractor::operator()(pcl::PointCloud

//静态(全局?)变量要在这里初始化

long unsigned int Frame::nNextId = 0;

Frame::Frame(LinK3D_Extractor* pLink3dExtractor, pcl::PointCloud<pcl::PointXYZ>::Ptr pLaserCloudIn):mpLink3dExtractor(pLink3dExtractor)

{

mnId = nNextId++;

(*mpLink3dExtractor)(pLaserCloudIn, mvAggregationKeypoints, mDescriptors, mClusterEdgeKeypoints);

}

2. CSF“布料”滤波算法

然后下面就是CSF的处理,这里其实可以将内容加在BALM当中,Github。因为CSF其实作用是区分地面点的作用

//

CSF csf;

csf.params.iterations = 600;

csf.params.time_step = 0.95;

csf.params.cloth_resolution = 3;

csf.params.bSloopSmooth = false;

csf.setPointCloud(*laserCloudSurfLast);

// pcl::io::savePCDFileBinary(map_save_directory, *SurfFrame);

std::vector<int> groundIndexes, offGroundIndexes;

// 输出的是vector<int>类型的地面点和非地面点索引

pcl::PointCloud<pcl::PointXYZI>::Ptr groundFrame(new pcl::PointCloud<pcl::PointXYZI>);

pcl::PointCloud<pcl::PointXYZI>::Ptr offGroundFrame(new pcl::PointCloud<pcl::PointXYZI>);

csf.do_filtering(groundIndexes, offGroundIndexes);

pcl::copyPointCloud(*laserCloudSurfLast, groundIndexes, *groundFrame);

pcl::copyPointCloud(*laserCloudSurfLast, offGroundIndexes, *offGroundFrame);

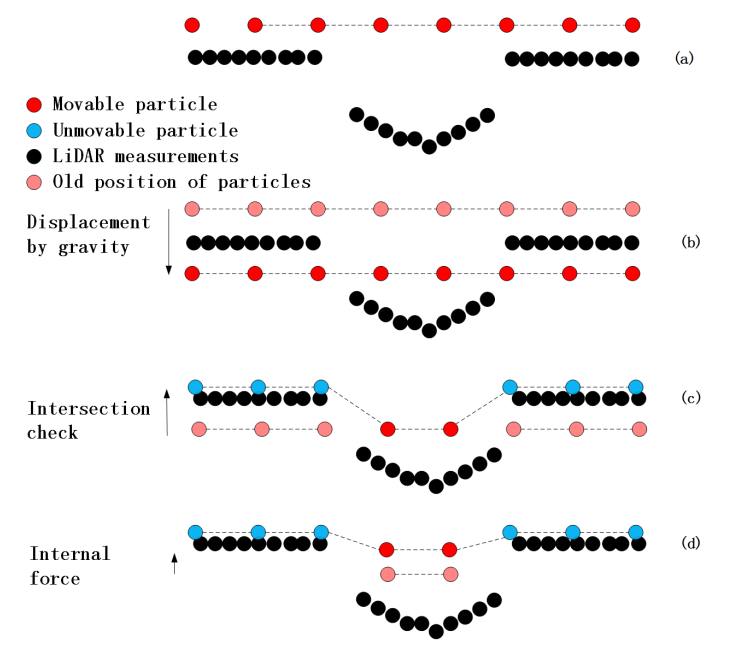

实际用一句话:把点云翻过来,罩上一块不同材质的布料,就可以得到地面了。参考实际物理的布,布料上的点之间存在不同的作用力,详细可以参考这篇文章:https://www.guyuehome.com/40977

3. BALM

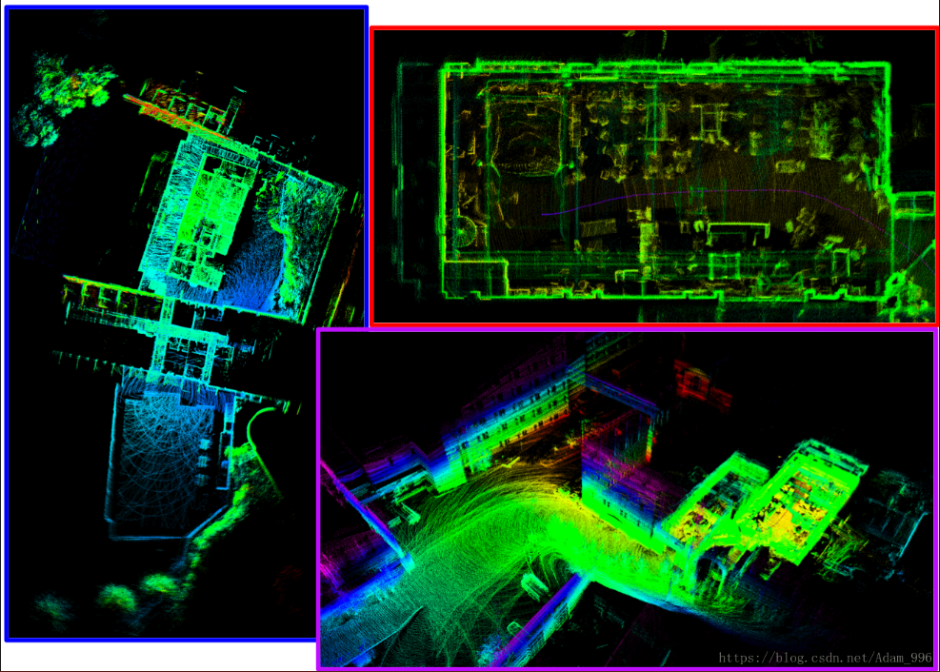

对于BALM其实主要分为三块,balm_front、scan2map、balm_back这三个流程。我们一开始使用的是ALOAM的前端来提取激光里程计信息,然后scan2map中加入CSF来进一步区分地面点。最后分成三类传入到BA约束当中https://blog.csdn.net/lovely_yoshino/article/details/133940976。

while(n.ok())

{

ros::spinOnce();

if(corn_buf.empty() || ground_buf.empty() || odom_buf.empty() || offground_buf.empty())

{

continue;

}

mBuf.lock();

uint64_t time_corn = corn_buf.front()->header.stamp.toNSec();

uint64_t time_ground = ground_buf.front()->header.stamp.toNSec();

uint64_t time_odom = odom_buf.front()->header.stamp.toNSec();

uint64_t time_offground = offground_buf.front()->header.stamp.toNSec();

if(time_odom != time_corn)

{

time_odom < time_corn ? odom_buf.pop() : corn_buf.pop();

mBuf.unlock();

continue;

}

if(time_odom != time_ground)

{

time_odom < time_ground ? odom_buf.pop() : ground_buf.pop();

mBuf.unlock();

continue;

}

if(time_odom != time_offground)

{

time_odom < time_ground ? odom_buf.pop() : ground_buf.pop();

mBuf.unlock();

continue;

}

ros::Time ct(ground_buf.front()->header.stamp);

pcl::PointCloud<PointType>::Ptr pl_ground_temp(new pcl::PointCloud<PointType>);

pcl::PointCloud<PointType>::Ptr pl_edge_temp(new pcl::PointCloud<PointType>);

pcl::PointCloud<PointType>::Ptr pl_offground_temp(new pcl::PointCloud<PointType>);

rosmsg2ptype(*ground_buf.front(), *pl_ground);

rosmsg2ptype(*corn_buf.front(), *pl_corn);

rosmsg2ptype(*offground_buf.front(), *pl_offground);

//pcl::io::savePCDFileBinary("/home/wb/FALOAMBA_WS/wb/Map/map.pcd", *pl_ground);

//pl_ground还有用,所以这里复制出一个新点云

*pl_ground_temp = *pl_ground;

*pl_edge_temp = *pl_corn;

*pl_offground_temp = *pl_offground;

corn_buf.pop(); ground_buf.pop(); offground_buf.pop();

q_odom.w() = odom_buf.front()->pose.pose.orientation.w;

q_odom.x() = odom_buf.front()->pose.pose.orientation.x;

q_odom.y() = odom_buf.front()->pose.pose.orientation.y;

q_odom.z() = odom_buf.front()->pose.pose.orientation.z;

t_odom.x() = odom_buf.front()->pose.pose.position.x;

t_odom.y() = odom_buf.front()->pose.pose.position.y;

t_odom.z() = odom_buf.front()->pose.pose.position.z;

odom_buf.pop();

mBuf.unlock();

// T_curr2last = T_curr2w * T_last2w¯¹

Eigen::Vector3d delta_t(q_last.matrix().transpose()*(t_odom-t_last));

Eigen::Quaterniond delta_q(q_last.matrix().transpose() * q_odom.matrix());

q_last = q_odom;

t_last = t_odom;

// T_curr2last * I

t_gather_pose = t_gather_pose + q_gather_pose * delta_t;

q_gather_pose = q_gather_pose * delta_q;

if(jump_flag < skip_num)

{

jump_flag++;

continue;

}

jump_flag = 0;

if(plcount == 0)// 第一帧

{

// 第一帧:T_curr2w = T_curr2last

q_poses.push_back(q_gather_pose);

t_poses.push_back(t_gather_pose);

}

else// 第二帧

{

// T_1_2_0 * T_2_2_1 = T_2_2_0

// T_2_2_0 * T_3_2_2 = T_3_2_0

q_poses.push_back(q_poses[plcount-1]*q_gather_pose);

t_poses.push_back(t_poses[plcount-1] + q_poses[plcount-1] * t_gather_pose);

}

parray.header.stamp = ct;

geometry_msgs::Pose apose;

apose.orientation.w = q_poses[plcount].w();

apose.orientation.x = q_poses[plcount].x();

apose.orientation.y = q_poses[plcount].y();

apose.orientation.z = q_poses[plcount].z();

apose.position.x = t_poses[plcount].x();

apose.position.y = t_poses[plcount].y();

apose.position.z = t_poses[plcount].z();

// ---------------------------- 当前帧位姿(优化前的)--------------------------------

nav_msgs::Odometry laser_odom;

laser_odom.header.frame_id = "camera_init";

laser_odom.child_frame_id = "aft_BA";

laser_odom.header.stamp = ct;

laser_odom.pose.pose.orientation.x = apose.orientation.x;

laser_odom.pose.pose.orientation.y = apose.orientation.y;

laser_odom.pose.pose.orientation.z = apose.orientation.z;

laser_odom.pose.pose.orientation.w = apose.orientation.w;

laser_odom.pose.pose.position.x = apose.position.x;

laser_odom.pose.pose.position.y = apose.position.y;

laser_odom.pose.pose.position.z = apose.position.z;

//发布优化前的位姿

pub_odom.publish(laser_odom);

//发布坐标关系

static tf::TransformBroadcaster br;

tf::Transform transform;

tf::Quaternion q;

transform.setOrigin(tf::Vector3(apose.position.x, apose.position.y, apose.position.z));

q.setW(apose.orientation.w);

q.setX(apose.orientation.x);

q.setY(apose.orientation.y);

q.setZ(apose.orientation.z);

transform.setRotation(q);

br.sendTransform(tf::StampedTransform(transform, laser_odom.header.stamp, "camera_init", "aft_BA"));

parray.poses.push_back(apose);

// 发布优化前的位姿

pub_pose.publish(parray);

pl_ground_buf.push_back(pl_ground_temp);

pl_edge_buf.push_back(pl_edge_temp);

pl_offground_buf.push_back(pl_offground_temp);

评论(0)

您还未登录,请登录后发表或查看评论